GEPLAN – Mission-Critical Logistics Intelligence for PEMEX (2006-)

GEPLAN supported Chicontepec, one of the most ambitious energy development programmes of its time. The programme combined multi-billion-dollar investment, a massive projected well count, technically difficult reservoirs and a highly experimental operating environment.

This made conventional logistics insufficient. The operation required a real-time intelligence layer capable of integrating information across contractors, maintenance activity, field execution and changing production realities, so logistics could continuously adapt to specific, experimental and evolving operational conditions.

GEPLAN was designed and implemented as an integrated platform connecting logistics, maintenance, inventory, procurement, telemetry and operational estimation in a single environment.

The system included:

- Real-time vehicle tracking and satellite communications

- Logistics planning and dispatch coordination

- Workshop, preventive and predictive maintenance

- Inventory, warehouse and spare parts management

- Procurement and supplier workflows

- Consignment inventory with third-party suppliers

- Production estimation and operational analytics

- Integration with PEMEX logistics and operational processes

- Dashboards, alerts and decision-support capabilities

Unlike traditional transformation programmes, GEPLAN was implemented without major upfront CAPEX. Delivered through a PEMEX transport concessionaire, the programme started with warehouse stabilisation, inventory clean-up and basic operational controls. Each improvement generated measurable savings, which funded the next phase: purchasing, remanufacturing, workshop intelligence, predictive maintenance, logistics and finally integration with PEMEX. Benefits appeared within the first three months and were continuously reinvested into the next sprint.

GEPLAN eventually became more than a logistics platform. It became the operational backbone connecting transport, maintenance, field operations and decision-making across one of the most complex industrial environments in Mexico.

Download the full case study to explore:

- Technology stack and architecture

- Logistics and maintenance integration

- Warehouse and procurement model

- Predictive maintenance approach

- Zero-CAPEX delivery model

- Governance and operating model transformation

- Integration with PEMEX

- Operational intelligence and decision support

Pre-Agentic Operational Intelligence at Scale (2016-)

The xSeil Case Study

Experiencias Xcaret, one of the largest integrated tourism operators in Latin America, needed more than route planning. It needed a logistics operating system capable of coordinating seven parks, roughly 500 hotels and around 15,000 daily passengers under fixed service commitments and continuous operational change.

Download the Technical One-Pager

A concise overview of the challenge, architecture and operating logic behind xSeil.

The Challenge: Fully Committed Demand

Unlike traditional logistics environments, xSeil operated under a fully committed demand model with effectively zero flexibility.

- Blind commitments: tickets could be sold independently by resellers with no real-time capacity validation.

- Locked SLAs: pickup location and time were fixed at the moment of sale and could not be renegotiated.

- Massive complexity: for each individual reservation, the system had to evaluate around 60 million possible assignment combinations in real time, across a base of approximately 12,000 daily reservations.

- Cascade risk: in real time, every assignment change altered the conditions of the others, creating multiple cascading waves of decisions across routes, vehicles, driver schedules and subsequent service commitments.

This was not simply a routing problem. It was a live operational control problem in which punctuality, vehicle policy, fuel efficiency, transfer logic and congestion had to be balanced continuously under real-world pressure.

The Platform: xSeil as a Logistics Operating System

xSeil was architected not as a narrow VRP tool, but as a full logistics operating platform covering the entire transport lifecycle.

It integrated:

- planning and optimisation;

- fleet availability and maintenance readiness;

- rental estimation and third-party capacity;

- real-time control and dynamic reassignment;

- transfer centre coordination;

- middleware and data normalisation;

- mobile execution and field feedback;

- managerial decision support.

Built on a hybrid stack combining Nutanix, VMware, bare-metal GPU infrastructure, multithreaded processing, IoT and edge computing, Redis caching, Python, Cython and PostgreSQL, the platform combined optimisation engines, middleware, memory layers and execution services into a closed-loop control model between planning and live operations.

The Operating Logic: Pre-Agentic Intelligence

To manage NP-hard logistics problems, xSeil did not pursue a theoretical global optimum. Instead, it used a stability-driven operational model designed for tractability under live conditions.

Its logic included:

- Pre-Agentic Allocation Logic

Passenger assignments behaved as implicit decision units competing for limited resources such as punctuality, capacity, route quality and vehicle policy, while compatible passengers were grouped cooperatively to reduce complexity and improve stability.

- Dynamic Utility and Internal Pricing

Objectives such as punctuality, fuel efficiency, transfers and route simplicity were reprioritised continuously according to system state, using dynamic internal “pricing” rather than fixed weights.

- “Good Enough” Execution Thresholds

Instead of pursuing a theoretical optimum, the platform stopped searching once a safe and sufficiently high-utility solution was reached, balancing quality, speed and computational effort.

- Fragility-Based Capacity Protection

When anomaly risk and propagation risk increased, the platform shifted from optimisation to protection by introducing additional slack, rental units, buffers or spare capacity.

- Stability Partitioning

The network was divided into low-propagation clusters so that stable decisions could be locked early and the active search space reduced step by step.

- Decision Memory

The platform reused successful route patterns, allocation structures and cluster configurations, replacing blind combinatorial search with informed retrieval and local adjustment.

This made xSeil operationally effective in conditions where brute-force optimisation alone would have been too slow or too fragile.
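As an illustration only, the interplay between dynamic internal pricing and a “good enough” threshold could be sketched as below. This is not the xSeil implementation: the `Candidate` fields, the `lateness_pressure` input, the repricing formula and the threshold value are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One hypothetical assignment option; each score is normalised to 0..1."""
    name: str
    punctuality: float
    fuel_efficiency: float
    route_simplicity: float


def dynamic_prices(lateness_pressure: float) -> dict[str, float]:
    """Reprice objectives from system state (lateness_pressure in 0..1).

    Assumed rule: punctuality becomes more expensive as pressure rises.
    Prices are normalised so that they always sum to 1.
    """
    raw = {
        "punctuality": 1.0 + 3.0 * lateness_pressure,
        "fuel_efficiency": 1.0,
        "route_simplicity": 1.0,
    }
    total = sum(raw.values())
    return {key: value / total for key, value in raw.items()}


def utility(candidate: Candidate, prices: dict[str, float]) -> float:
    """Price-weighted sum of the candidate's objective scores."""
    return sum(prices[key] * getattr(candidate, key) for key in prices)


def first_good_enough(candidates, prices, threshold):
    """Stop at the first candidate meeting the threshold, else return the best seen."""
    best, best_utility = None, float("-inf")
    for candidate in candidates:
        value = utility(candidate, prices)
        if value > best_utility:
            best, best_utility = candidate, value
        if value >= threshold:
            break  # good enough: skip the rest of the search
    return best, best_utility


candidates = [
    Candidate("A", punctuality=0.50, fuel_efficiency=0.90, route_simplicity=0.90),
    Candidate("B", punctuality=0.95, fuel_efficiency=0.60, route_simplicity=0.60),
    Candidate("C", punctuality=0.99, fuel_efficiency=0.99, route_simplicity=0.99),
]
calm_choice, _ = first_good_enough(candidates, dynamic_prices(0.0), threshold=0.75)
stressed_choice, _ = first_good_enough(candidates, dynamic_prices(1.0), threshold=0.75)
```

With these made-up numbers, the calm state accepts candidate A immediately, while the stressed state reprices punctuality, rejects A and stops at B. Candidate C is never evaluated in either case, which is the trade-off the threshold is meant to express.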

Download the Technical One-Pager

Go deeper into the engineering principles behind xSeil, including:

- the operating challenge;

- architecture overview;

- logistics platform design;

- pre-agentic allocation logic;

- dynamic prioritisation and fragility management;

- why the case remains relevant for mission-critical systems today.

Shared Mobility Intelligence at Scale

The Car Evolution Case Study (2019-)

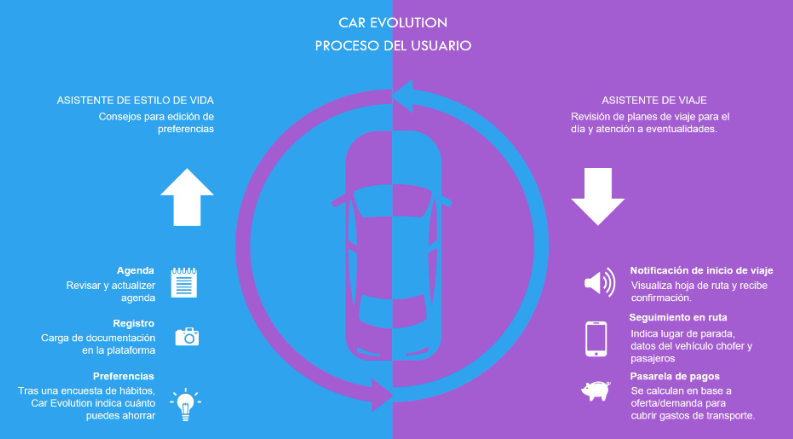

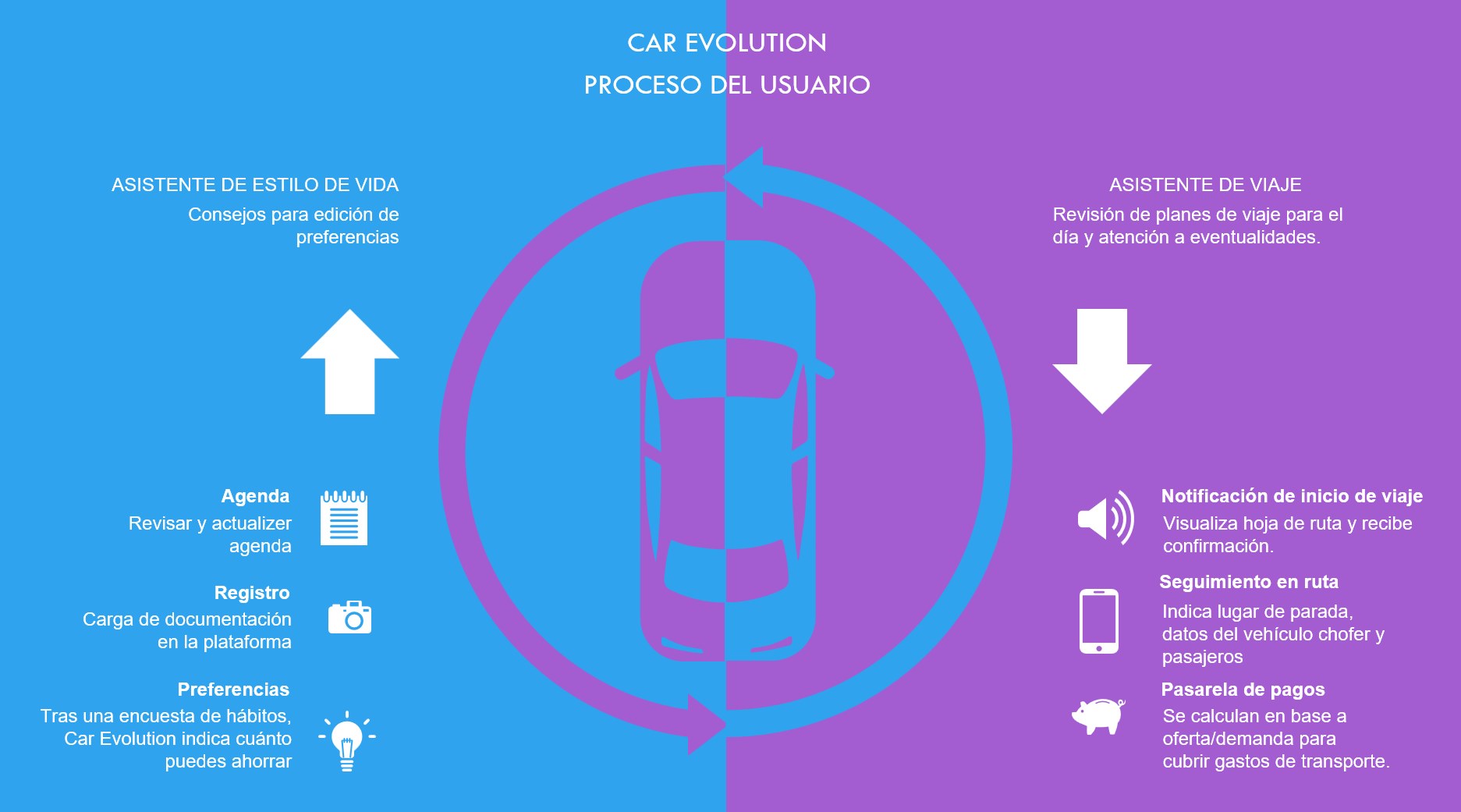

Car Evolution was conceived as a proof of concept for a new generation of intelligent shared mobility platforms.

Built on the operational logic previously validated through xSeil, the concept explored how advanced logistics intelligence could be applied to urban mobility, ride sharing and car pooling at scale.

Rather than acting as a simple ride-sharing marketplace, Car Evolution was designed as a dynamic planning engine capable of coordinating thousands of users, vehicles, preferences and daily agenda changes in real time.

The objective was to reduce transport costs, improve vehicle utilisation, lower CO2 emissions and create a more resilient mobility network.

The Challenge: Dynamic Mobility Under Constant Change

Traditional ride-sharing and car-pooling platforms depend heavily on manual decisions by users: publishing trips, selecting passengers, negotiating stops and reacting to changes individually.

Car Evolution explored a different model.

The platform would continuously analyse:

- user agendas and recurring mobility patterns;

- driver and passenger preferences;

- availability of private and shared vehicles;

- traffic congestion and route conditions;

- service reliability and punctuality commitments;

- environmental policies and CO2 objectives;

- demand peaks and future capacity requirements.

This created a highly complex decision environment where the platform had to constantly rebalance thousands of concurrent trips while preserving reliability and user trust.

The Platform Vision

Car Evolution was designed as an intelligent mobility operating platform rather than a traditional booking application.

It combined:

- shared mobility planning;

- driver-passenger matching;

- dynamic route optimisation;

- automated travel plans;

- intelligent compensation mechanisms;

- predictive demand estimation;

- subscription-based mobility models;

- contingency planning for disruptions.

The platform could recommend whether a user should drive, share a vehicle, receive compensation for additional travel time, or use a chauffeur-style service depending on cost, demand and personal preferences.

Its operating model also included future capabilities such as:

- dynamic pricing;

- smart contracts between participants;

- integration with third-party providers such as taxi, Uber or Cabify;

- public-sector dashboards for mobility policy simulation;

- scenario comparison between punctuality, cost and CO2 reduction.

Pre-Agentic Mobility Intelligence

At its core, Car Evolution reused the same decision logic previously validated in large-scale logistics environments.

The platform treated each trip request as an implicit decision unit competing for limited resources such as route quality, travel time, cost, vehicle type and punctuality.

Compatible users were grouped collaboratively to reduce complexity and increase overall system efficiency.
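A minimal sketch of what such grouping might look like is shown below. The compatibility rule (same origin zone, same destination zone, same departure window) and the names `TripRequest`, `window_minutes` and `seats` are assumptions for illustration; the source does not specify how compatibility was defined.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TripRequest:
    """Hypothetical trip request; depart_minute is minutes after midnight."""
    user: str
    origin_zone: str
    dest_zone: str
    depart_minute: int


def group_compatible(requests, window_minutes=15, seats=4):
    """Bucket requests by zone pair and time window, then split by seat count."""
    buckets = defaultdict(list)
    for request in requests:
        key = (
            request.origin_zone,
            request.dest_zone,
            request.depart_minute // window_minutes,
        )
        buckets[key].append(request)

    groups = []
    for key in sorted(buckets):
        members = sorted(buckets[key], key=lambda r: r.depart_minute)
        # One group per vehicle: never more members than available seats.
        for start in range(0, len(members), seats):
            groups.append(members[start:start + seats])
    return groups


requests = [
    TripRequest("ana", "north", "centre", 480),
    TripRequest("ben", "north", "centre", 485),
    TripRequest("cat", "north", "centre", 500),
    TripRequest("dan", "south", "centre", 482),
]
groups = group_compatible(requests)
```

Grouping first shrinks the number of decision units the planner has to rebalance: here four requests collapse into three groups, and only the groups compete for vehicles.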

The platform also used:

- dynamic utility and internal pricing;

- “good enough” execution thresholds;

- fragility-based capacity protection;

- low-propagation clustering;

- decision memory and reuse of successful mobility patterns.

This made it possible to address a problem that is extremely difficult to scale in practice: continuously re-planning a large mobility network in real time when users change agendas, vehicles fail, traffic increases or new requests appear.

Potential Impact

Although developed as a proof of concept, Car Evolution anticipated many of the mobility challenges that are now becoming mainstream:

- shared mobility;

- subscription-based vehicles;

- sustainability and CO2 reduction;

- intelligent transport orchestration;

- dynamic ride sharing;

- AI-driven travel planning.

The concept demonstrated how a logistics platform originally designed for mission-critical operations could evolve into a broader mobility intelligence platform with potential applications across smart cities, corporate mobility, tourism, airports and regional transport systems.

Car Evolution showed that the future of mobility is not only about more vehicles or more transport providers. It is about better orchestration, better prediction and better decision-making across the entire network.

Source material: xSeil had previously been validated in Riviera Maya for more than 12,000 passengers per day, and Car Evolution was conceived as the next step towards intelligent car-pooling and shared mobility using the same underlying allocation logic and AI principles.

Liquidity Control Under Regime Change

The Phylons Neural Network Case Study

Phylons began as a liquidity-control and market-regime project, but evolved into something broader: an explainable architecture for detecting when a complex system is beginning to lose stability.

The original challenge was not simply to build a wallet, a payment layer or a predictive engine. It was to understand how liquidity stress emerges, how it propagates, and how to intervene before a local imbalance becomes a wider systemic problem.

In practice, the objective was to answer a more difficult question:

How much structural buffer is required to preserve stability when the environment is becoming more fragile?

1. The Problem

At a structural level, the liquidity problem turned out to be mathematically very similar to the transport and logistics work developed previously.

Vehicle capacity became financial liquidity.

Passenger assignments became liquidity allocations.

Routing constraints became transfer, settlement and reserve constraints.

Buffer allocation became liquidity reserve management.

The problem was therefore not a payment problem, but a constrained resource-allocation problem under uncertainty.

Like transport, the system had to balance:

- short-term utilization;

- reserve preservation;

- propagation risk;

- changing operating conditions;

- local shocks that could spread across the wider network.

The difficulty was even greater than in transport because the relevant signals were not directly observable.

Liquidity stress is rarely visible in a clean way. It has to be inferred from fragmented market data, transaction patterns, timing mismatches, reserve pressure, execution delays and indirect indicators of instability.

This required extensive work in:

- data acquisition;

- signal discovery;

- machine learning;

- first-principles modelling;

- identification of non-obvious predictive factors.

Over time, the work moved away from trying to predict exact future states.

The real objective became more practical and more robust:

- detect when the current regime is weakening;

- identify early signs of instability;

- adjust reserves and buffers before visible failure occurs;

- preserve explainability and operational control.

Rather than asking “what exactly will happen?”, the system focused on “is the current state becoming more fragile?”
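One simple way to make that question operational is sketched below: compare recent dispersion with historical dispersion and scale the reserve buffer accordingly. The dispersion ratio, the function names and the cap on the multiplier are assumptions chosen for the example, not the fragility measure used in Phylons.

```python
from statistics import pstdev


def fragility_ratio(series, recent=5):
    """Dispersion of the last `recent` points relative to the earlier history."""
    baseline, window = series[:-recent], series[-recent:]
    base_sd = pstdev(baseline)
    if base_sd == 0:
        # A flat history gives no scale; treat any new movement as maximal fragility.
        return 1.0 if pstdev(window) == 0 else float("inf")
    return pstdev(window) / base_sd


def reserve_buffer(base_buffer, ratio, max_multiplier=3.0):
    """Scale the buffer up as fragility rises; never drop below the base level."""
    multiplier = min(max(ratio, 1.0), max_multiplier)
    return base_buffer * multiplier


stable = [10, 11, 10, 11, 10, 11, 10, 11, 10, 11]
stressed = [10, 11, 10, 11, 10, 10, 14, 7, 15, 6]

stable_buffer = reserve_buffer(100.0, fragility_ratio(stable))
stressed_buffer = reserve_buffer(100.0, fragility_ratio(stressed))
```

The sketch makes no forecast of the next value. It only reports that the recent regime is noisier than the one before it, and it raises the buffer before any failure is visible.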

2. The Phylons Architecture

To solve this problem, the project evolved into a Phylon Neural Network.

The name Phylon comes from the Greek word for family, race, lineage or tribe.

The concept reflects the way the architecture works: individual signals can be grouped into larger “families” of related behaviours, and those families can in turn combine into higher-order structures.

A Phylon is therefore not just a feature or a neuron.

It is a meaningful behavioural unit with:

- a specific role;

- explicit inputs and outputs;

- measurable contribution;

- identifiable relationships with other Phylons;

- the ability to be combined, replicated, weakened, strengthened or removed.

Unlike conventional deep learning, where internal representations are often opaque, the Phylons architecture was designed to remain visible and explainable.

Every important signal, combination and decision path could be traced.

Expanded Signal Space

The architecture began by expanding the signal space far beyond traditional early-warning indicators.

Hundreds of candidate predictors were engineered to capture:

- local stress;

- delay accumulation;

- coupling between elements;

- propagation potential;

- asymmetries;

- structural imbalance;

- hidden fragility.

Most signals were weak when taken individually.

The value emerged when they were combined.

Hierarchical Combinatorial Logic

The architecture progressively grouped signals into stronger and more robust structures:

- individual predictors;

- combinations of predictors;

- combinations of combinations;

- higher-order behavioural “families”.

This created a system that behaved like a deep neural network, but with explicit structure and explainable relationships.

The objective was not to create a black box, but to create a hierarchy of interpretable building blocks.
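The layering described above can be illustrated with a toy example in which weak binary signals are combined pairwise and only the conjunctions that clear a quality bar are kept. The signal names, the use of precision as the score and the data are invented for the sketch; the actual combination rules are not described in the source.

```python
from itertools import combinations


def precision(fires, target):
    """Share of firings that coincide with a stress event (0.0 if it never fires)."""
    hits = sum(1 for f, t in zip(fires, target) if f and t)
    total = sum(fires)
    return hits / total if total else 0.0


def conjunction(a, b):
    """A combined unit fires only when both of its parents fire."""
    return [x & y for x, y in zip(a, b)]


def build_level(units, target, min_precision):
    """Combine units pairwise, keeping conjunctions that clear min_precision."""
    kept = {}
    for (name_a, a), (name_b, b) in combinations(units.items(), 2):
        combo = conjunction(a, b)
        if precision(combo, target) >= min_precision:
            # The name records the structure, so the unit stays traceable.
            kept[f"({name_a} & {name_b})"] = combo
    return kept


# Hypothetical data: 1 means "signal fired" or "stress event occurred".
target = [0, 0, 1, 0, 1, 0, 0, 1]
signals = {
    "delay":     [1, 0, 1, 1, 1, 0, 0, 1],
    "coupling":  [0, 1, 1, 0, 1, 0, 1, 1],
    "imbalance": [1, 1, 0, 0, 1, 1, 0, 0],
}
level_2 = build_level(signals, target, min_precision=0.8)
```

In this toy data each individual signal is right at most 60% of the time, while the single surviving combination is right every time it fires. Feeding `level_2` back into `build_level` would produce the "combinations of combinations" tier.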

Stability Through Discretization

To reduce noise and improve robustness, signals were transformed into ranges rather than treated only as continuous values.

This improved:

- stability under regime change;

- interpretability;

- resistance to overfitting;

- reliability in low-data environments.
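As a small illustration, range-based discretisation can be written with the standard-library `bisect` module. The edge values and labels below are assumptions; the source does not state how ranges were chosen.

```python
from bisect import bisect_right


def discretize(value, edges, labels):
    """Map a continuous value to the label of the range it falls in.

    `edges` must be sorted ascending; a value equal to an edge belongs
    to the range above that edge.
    """
    if len(labels) != len(edges) + 1:
        raise ValueError("need exactly one more label than edges")
    return labels[bisect_right(edges, value)]


EDGES = [0.3, 0.7]                   # hypothetical range boundaries
LABELS = ["low", "medium", "high"]

noisy_readings = [0.52, 0.55, 0.49]  # small fluctuations around the same level
ranges = [discretize(v, EDGES, LABELS) for v in noisy_readings]
```

All three noisy readings land in the same range, so downstream combinations see one stable state instead of three slightly different numbers.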

Optimization and Pruning

Because the combinatorial space became extremely large, a major engineering effort was required to keep the architecture computationally tractable.

Custom optimization and filtering mechanisms were developed to:

- evaluate large numbers of candidate Phylons;

- remove unstable combinations;

- eliminate redundancy;

- retain only combinations that improved robustness.

This allowed the architecture to operate with hundreds or thousands of candidate signals while remaining practical in production.
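A simplified sketch of such filtering follows. It assumes each candidate has a binary firing vector and a score per evaluation period, drops candidates whose worst-period score is too low (instability), and then greedily drops near-duplicates using Jaccard overlap (redundancy). The thresholds, names and data are illustrative, not the project's actual mechanisms.

```python
def jaccard(a, b):
    """Overlap between two binary firing vectors (1.0 if neither ever fires)."""
    both = sum(1 for x, y in zip(a, b) if x and y)
    either = sum(1 for x, y in zip(a, b) if x or y)
    return both / either if either else 1.0


def prune(candidates, period_scores, min_score=0.6, max_overlap=0.8):
    """Return the names of candidates that are both stable and non-redundant."""
    # Stability: the worst period must still clear the bar.
    stable = [n for n in candidates if min(period_scores[n]) >= min_score]
    # Visit the strongest candidates first so weaker duplicates are the ones dropped.
    stable.sort(
        key=lambda n: sum(period_scores[n]) / len(period_scores[n]),
        reverse=True,
    )
    kept = []
    for name in stable:
        if all(jaccard(candidates[name], candidates[k]) <= max_overlap for k in kept):
            kept.append(name)
    return kept


candidates = {
    "p1": [1, 1, 0, 1, 1, 0, 1, 0],
    "p2": [1, 1, 0, 1, 1, 0, 1, 1],  # fires almost exactly when p1 does
    "p3": [0, 0, 1, 0, 0, 1, 0, 0],
    "p4": [0, 1, 1, 0, 0, 0, 0, 1],
}
period_scores = {
    "p1": [0.90, 0.80],
    "p2": [0.85, 0.80],
    "p3": [0.70, 0.75],
    "p4": [0.95, 0.20],  # strong in one period, weak in the other
}
survivors = prune(candidates, period_scores)
```

Here `p4` is removed as unstable and `p2` as redundant with `p1`, leaving two candidates out of four. The greedy pass is quadratic in the number of survivors, so a production system would need something more scalable.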

Selective Adaptation Under Regime Change

One of the strongest aspects of the architecture is that every Phylon preserves its own “recipe” as part of its identity.

Each one retains:

- its internal structure;

- thresholds;

- weights;

- dependencies;

- activation logic;

- contextual relevance.

This means the system can identify:

- which Phylons remain valid under a new regime;

- which ones should be weakened or removed;

- which combinations should be strengthened;

- which new structures should be explored.

Instead of retraining a monolithic model from scratch, the system can selectively adapt while preserving what still works.

That makes the architecture especially relevant for unstable, nonlinear and fast-changing environments.
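To make the idea concrete, the sketch below stores each unit's recipe as an immutable record and adapts a set of units from their scores under a new regime. The `Phylon` fields are a reduced, hypothetical subset of the recipe listed above, and the keep, weaken and remove thresholds are assumptions.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Phylon:
    """Hypothetical minimal recipe: what the unit reads and how it fires."""
    name: str
    inputs: tuple[str, ...]
    threshold: float
    weight: float


def adapt(phylons, new_regime_score, keep_at=0.6, drop_below=0.3, decay=0.5):
    """Keep, weaken or remove each Phylon based on its score under the new regime."""
    adapted = []
    for phylon in phylons:
        score = new_regime_score[phylon.name]
        if score >= keep_at:
            adapted.append(phylon)  # still valid: keep unchanged
        elif score >= drop_below:
            # Partially valid: same recipe, reduced influence.
            adapted.append(replace(phylon, weight=phylon.weight * decay))
        # Below drop_below: removed entirely.
    return adapted


phylons = [
    Phylon("delay_family", ("delay", "queue_length"), threshold=0.7, weight=1.0),
    Phylon("coupling_family", ("coupling", "delay"), threshold=0.5, weight=0.8),
    Phylon("asymmetry_family", ("asymmetry",), threshold=0.4, weight=0.6),
]
scores_after_shift = {
    "delay_family": 0.80,
    "coupling_family": 0.45,
    "asymmetry_family": 0.10,
}
adapted = adapt(phylons, scores_after_shift)
```

Because every unit carries its own recipe, the update touches only the units whose scores changed. The `delay_family` record, for instance, is returned untouched.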

Why the Case Matters

Although initially developed in a financial context, the Phylons architecture confirmed something broader.

The same logic can be applied across different classes of constrained systems:

- transport and logistics;

- liquidity and financial control;

- enterprise applications and process networks;

- supply chains;

- industrial operations;

- mission-critical infrastructure.

What changes from one domain to another is not the mathematical core.

Only the interpretation changes:

- capacity becomes liquidity;

- liquidity becomes functional capacity;

- routes become process flows;

- reserves become buffers;

- local shocks become propagation risk.

The core problem remains the same:

How to preserve stability when local disturbances can spread faster than centralized control can react.

Phylons was important because it demonstrated that this problem can be approached through an explainable, modular and regime-aware architecture rather than through a purely opaque predictive model.